The Cloud-based platform to provide B2B managed Wi-Fi services

It works with your favorite hardware option

Perfect to offer B2B managed Wi-Fi services

MSP

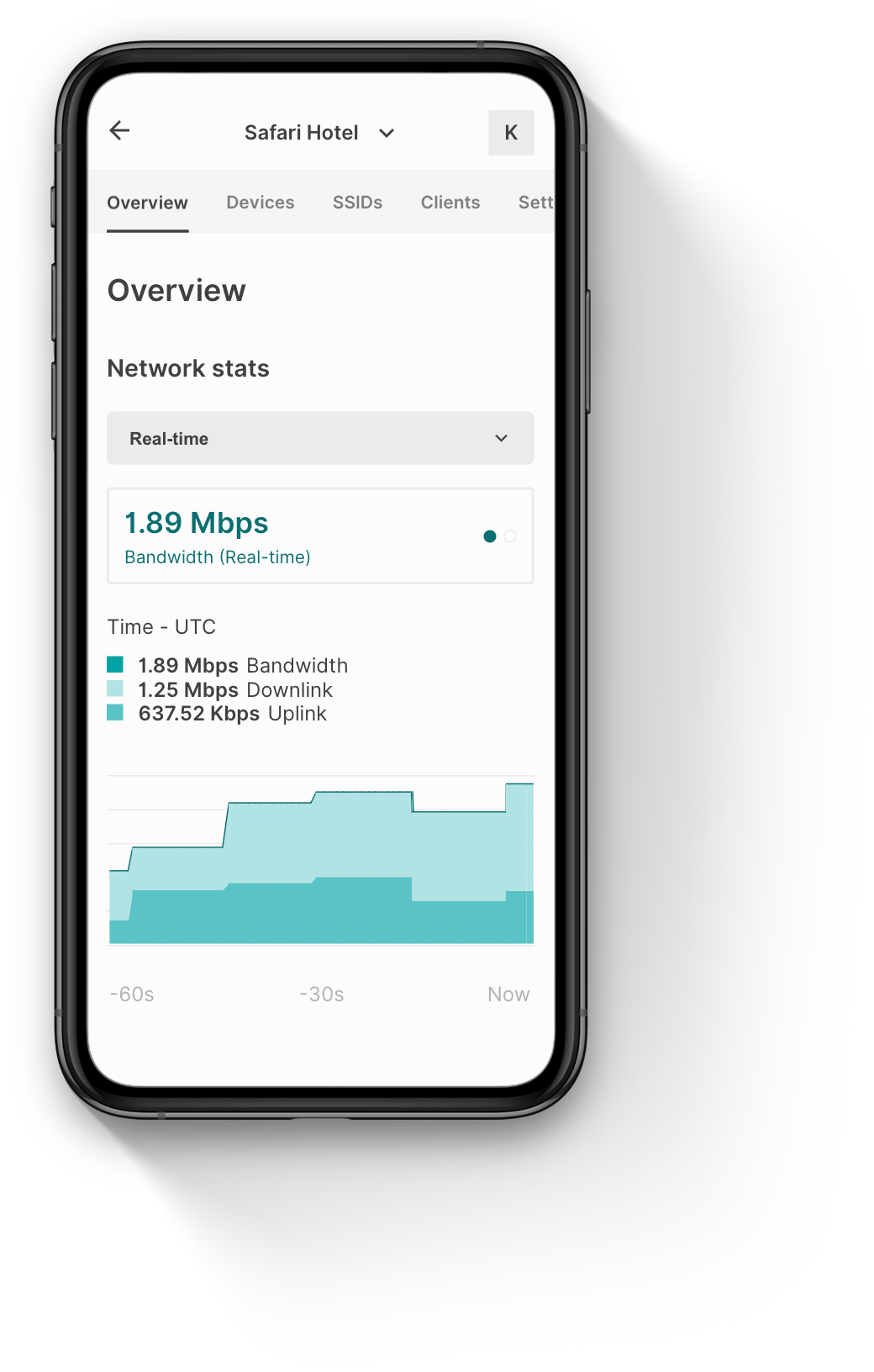

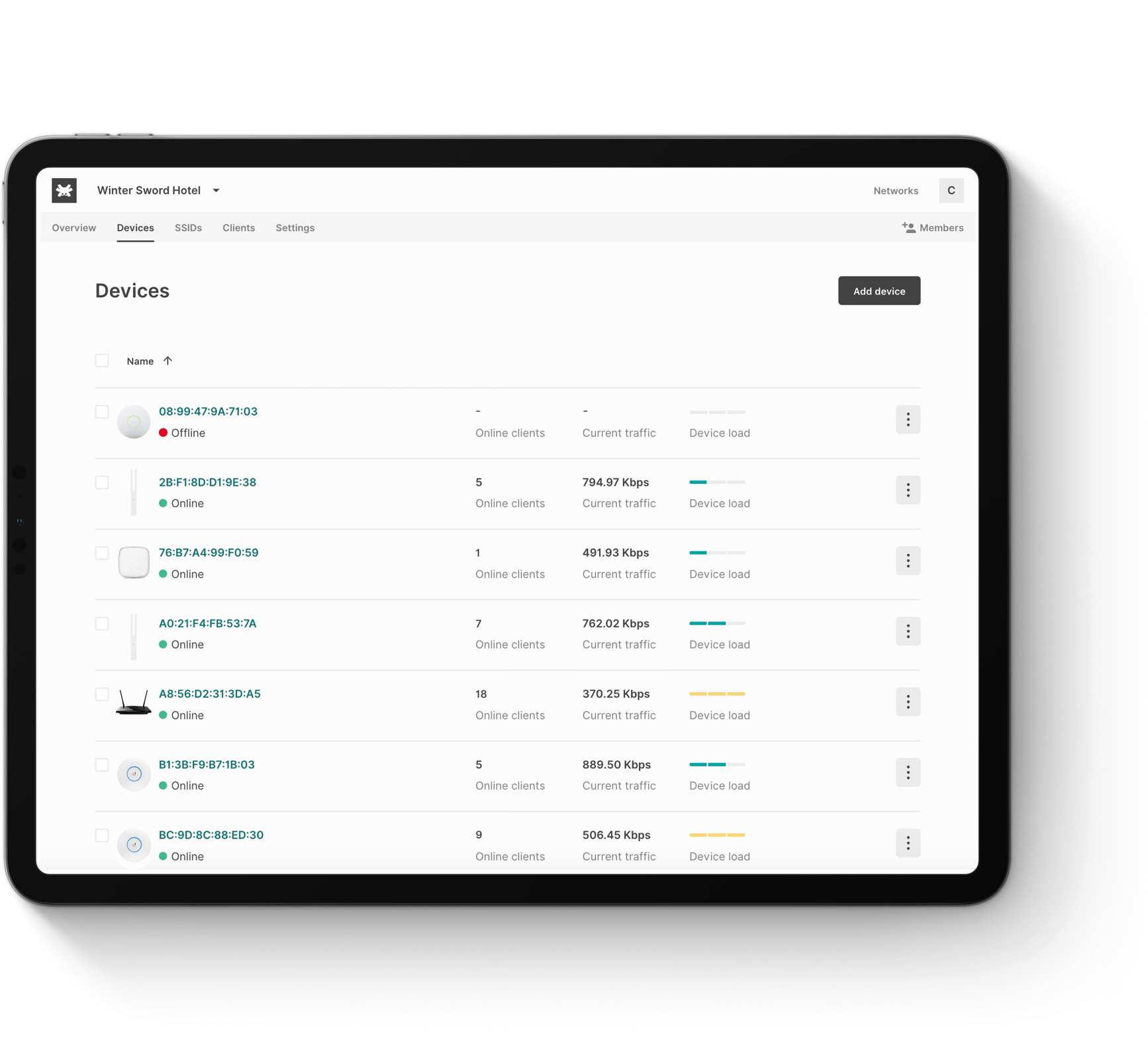

Operational efficiency

All sites manageable via the same centralized dashboard

Software flexibility

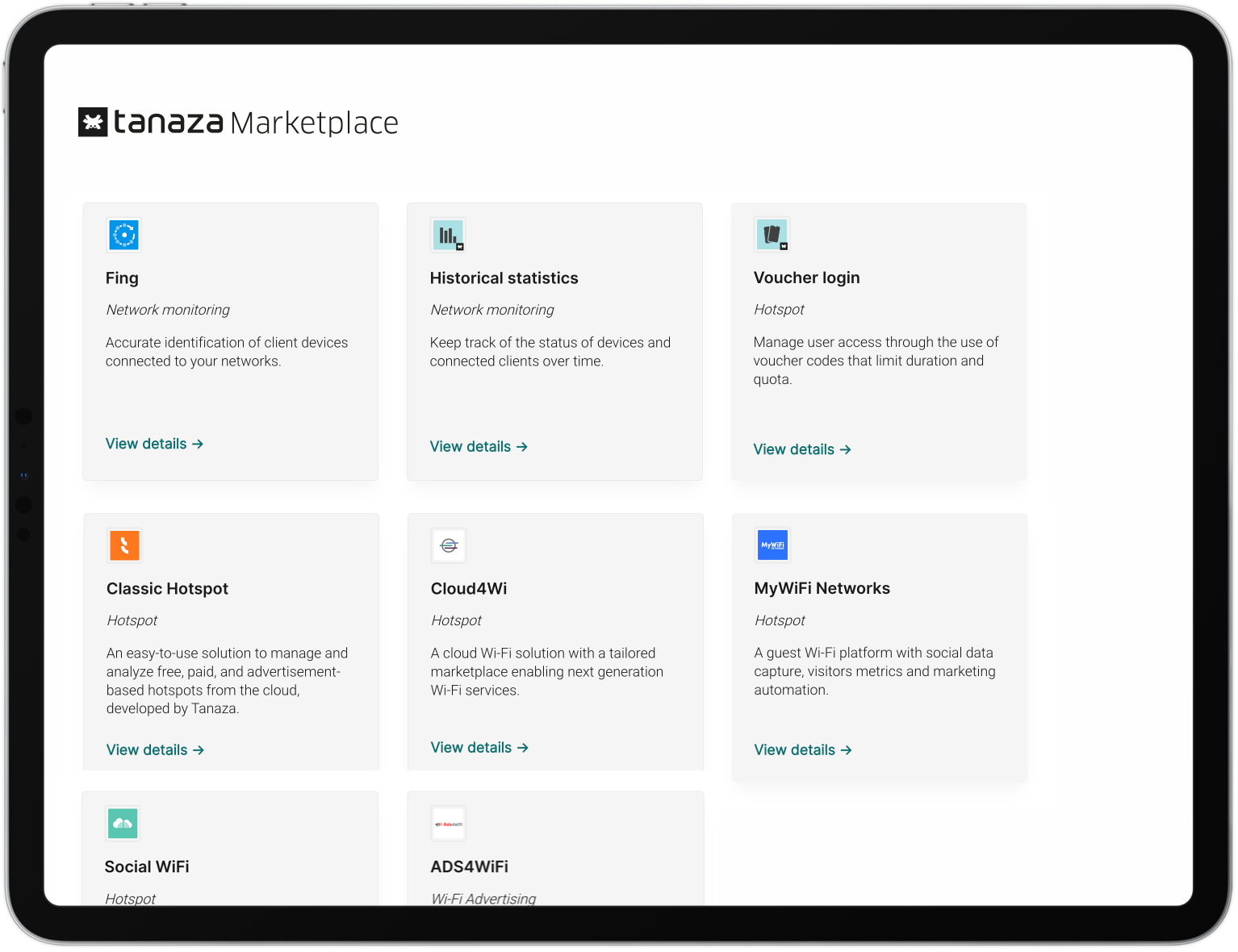

Works with many Apps to meet any customer’s use case

ISP

Virtualization and automation

Via APIs that abstract the hardware layer

Agile at scale

Cloud-native Wi-Fi access control with SDN APIs that integrate with NFV orchestrators

Software that works with a broad list of hardware options

Tanaza works with a wide range of white-box and off-the-shelf Wi-Fi access points and routers, from high-performance to cost-effective models, for indoor or outdoor use and desktop or ceiling-mounted deployments with PoE.

From Edgecore to Mikrotik to Ubiquiti access points, we've got you covered.